AI Networking

Broadberry is an NVIDIA Elite partner, fully accredited to build AI infrastructure, including AI PODs and AI Factories tailored to each customer's workloads.

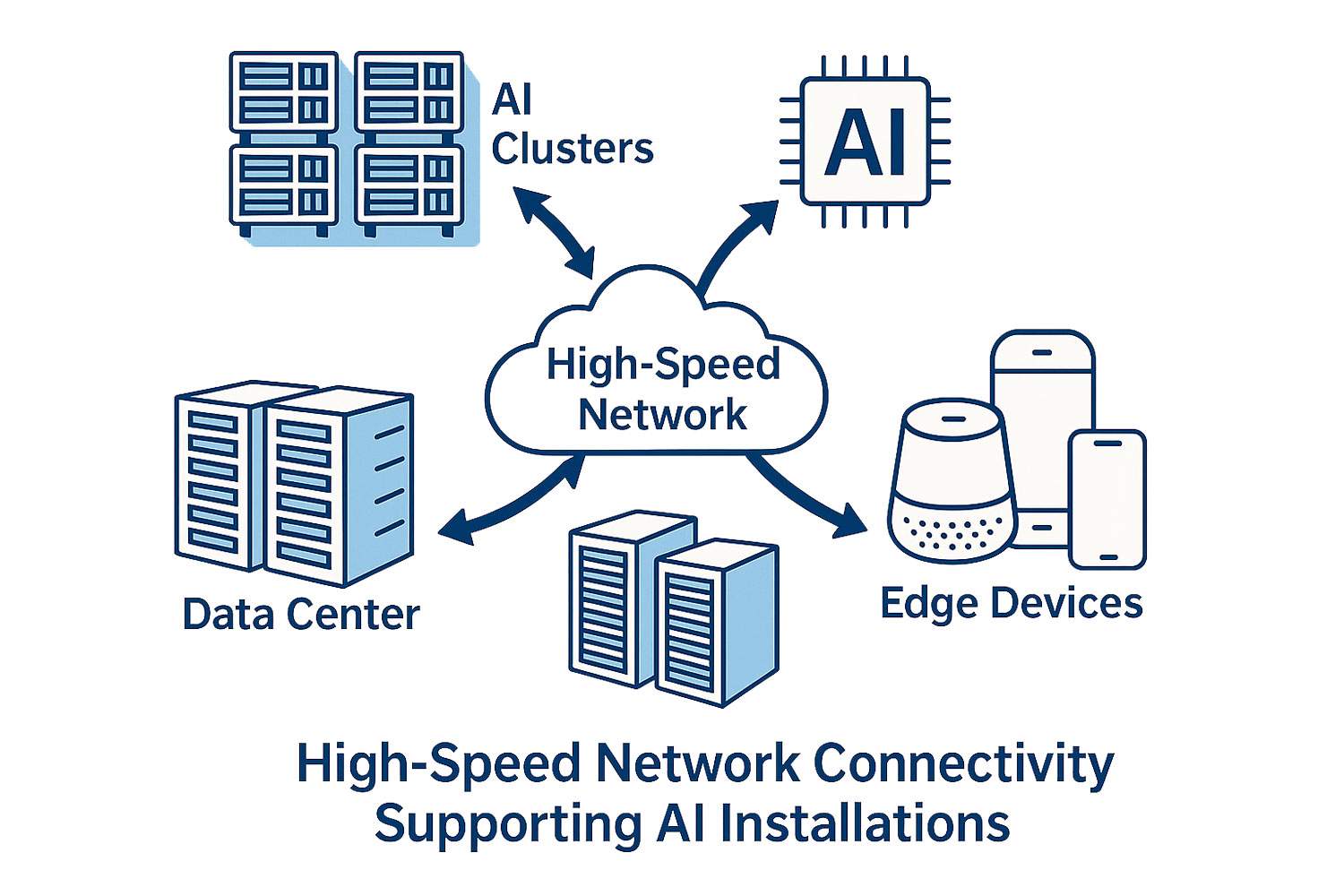

Artificial Intelligence workloads place extraordinary demands on the underlying IT infrastructure, far beyond those of traditional enterprise applications. Modern AI systems must ingest, process, and move enormous volumes of data at unprecedented speeds, often across clusters of high performance servers equipped with GPUs, TPUs, or other accelerators. As these workloads scale in complexity and size, the network becomes a central pillar of performance rather than a supporting component.

In this environment, network throughput, latency, and reliability directly influence how quickly models can be trained, how efficiently data can be shared between nodes, and how smoothly real time inference can be delivered. Even the most powerful compute hardware cannot operate at full potential if the network becomes a bottleneck. High speed connectivity ensures that data flows freely between storage, compute, and edge environments, enabling AI systems to operate cohesively as a unified platform.

For this reason, high speed networking is no longer a luxury or an optional enhancement; it is a foundational requirement for any serious AI deployment. Whether an organisation is training large scale deep learning models, running distributed inference pipelines, or integrating cloud and edge environments, advanced networking capabilities are essential to achieving predictable, scalable, and efficient performance.

Below are the key reasons why high performance networking is indispensable in modern AI infrastructure.

AI systems routinely process vast amounts of data, including high resolution images, video streams, sensor outputs, telemetry, and application logs. These datasets must move quickly and reliably across the infrastructure to keep workflows running smoothly.

High speed networking enables:

In short, the faster the network, the more efficiently data can flow, directly impacting training times, throughput, and overall productivity.
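As a rough illustration of how link speed affects data movement, the sketch below estimates the ideal time to move a dataset over a single link. The 10 TB dataset size is a hypothetical figure chosen for the example, and the function name `transfer_seconds` is our own. The calculation ignores protocol overhead, storage speed, and congestion, so real transfers will take longer.

```python
def transfer_seconds(dataset_bytes: float, link_gbps: float) -> float:
    """Idealised time to move dataset_bytes over one link of link_gbps gigabits/s.

    Assumption: the link is the only limit (no protocol overhead, no storage
    bottleneck), so this is a lower bound rather than a measured figure.
    """
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    bits_to_move = dataset_bytes * 8
    bits_per_second = link_gbps * 1e9
    return bits_to_move / bits_per_second


# Hypothetical dataset size, used only for illustration.
DATASET_BYTES = 10e12  # 10 TB

for gbps in (25, 100, 400, 800):
    seconds = transfer_seconds(DATASET_BYTES, gbps)
    print(f"{gbps:>3} GbE: {seconds:6.0f} s")
```

Under these assumptions, each fourfold increase in link speed cuts the ideal transfer time by a factor of four, which is why link speed feeds so directly into training times.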

Modern AI workloads rarely run on a single machine. Instead, they rely on clusters of GPUs, TPUs, or other accelerators spread across multiple servers. These distributed systems must communicate constantly and at extremely high speeds.

High speed connectivity provides:

Without a high bandwidth, low latency network, distributed AI systems simply cannot operate effectively.
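To make the cost of inter-node communication concrete, the sketch below estimates the time for one gradient synchronisation using ring all-reduce, a common collective pattern in distributed training. The page itself does not name a specific algorithm, so treat this as one illustrative choice. The model size, node count, and the function name `ring_allreduce_seconds` are hypothetical, and the estimate counts bandwidth only, ignoring latency and any overlap with compute.

```python
def ring_allreduce_seconds(payload_bytes: float, num_nodes: int, link_gbps: float) -> float:
    """Bandwidth-only estimate for one ring all-reduce across num_nodes.

    In a ring all-reduce each node sends (and receives) 2 * (N - 1) / N times
    the payload size. Assumption: per-message latency is ignored.
    """
    if num_nodes < 1 or link_gbps <= 0:
        raise ValueError("num_nodes must be >= 1 and link_gbps must be positive")
    if num_nodes == 1:
        return 0.0  # nothing to synchronise on a single node
    bytes_per_second = link_gbps * 1e9 / 8
    bytes_sent_per_node = 2 * (num_nodes - 1) / num_nodes * payload_bytes
    return bytes_sent_per_node / bytes_per_second


# Hypothetical example: 1 billion parameters stored as 2-byte values, 8 nodes.
GRADIENT_BYTES = 1e9 * 2

for gbps in (100, 400):
    t = ring_allreduce_seconds(GRADIENT_BYTES, num_nodes=8, link_gbps=gbps)
    print(f"{gbps} Gb/s link: {t:.3f} s per synchronisation")
```

Because this synchronisation can repeat on every training step, even fractions of a second per step add up quickly, which is the practical reason distributed training depends on high bandwidth links.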

In industries where milliseconds matter - such as autonomous vehicles, industrial automation, IoT analytics, healthcare diagnostics, and financial trading - network performance directly affects outcomes.

High speed networking ensures:

For these applications, slow or unreliable connectivity is simply not an option.

AI deployments increasingly span hybrid environments, combining on premises infrastructure, cloud platforms, and edge devices. High speed connectivity is the glue that holds these ecosystems together.

It enables:

This level of integration is only possible with robust, high bandwidth networking.

AI models are growing exponentially in size and complexity, with many now containing billions - or even trillions - of parameters. As these models scale, so do the demands placed on the network.

To support next generation AI workloads, organisations increasingly rely on:

Without these technologies, scaling AI infrastructure becomes inefficient, costly, and ultimately unsustainable.

NVIDIA Spectrum-2 based 25GbE/100GbE 1U Open Ethernet switch with Cumulus Linux, 48 SFP28 ports and 12 QSFP28


NVIDIA Spectrum-3 based 100GbE 2U Open Ethernet switch with Cumulus Linux, 64 QSFP28 ports, 2 Power Supplies (AC), x86 CPU, standard depth, C2P airflow, Rail Kit

NVIDIA Spectrum-3 based 100GbE 2U Open Ethernet switch with Cumulus Linux, 64 QSFP28 ports, 2 Power Supplies (AC), x86 CPU, standard depth, P2C airflow, Rail Kit

NVIDIA Quantum 2 based NDR InfiniBand Switch, 64 NDR ports, 32 OSFP ports, 2 Power Supplies (AC), Standard depth, Unmanaged, P2C airflow, Rail Kit


NVIDIA Quantum 2 based NDR InfiniBand Switch, 64 NDR ports, 32 OSFP ports, 2 Power Supplies (AC), Standard depth, Managed, C2P airflow, Rail Kit

NVIDIA Quantum 2 based NDR InfiniBand Switch, 64 NDR ports, 32 OSFP ports, 2 Power Supplies (AC), Standard depth, Managed, P2C airflow, Rail Kit

NVIDIA Spectrum-3 based 400GbE 1U Open Ethernet Switch with Cumulus Linux, 32 QSFPDD ports, 2 Power Supplies (AC), x86 CPU, standard depth, C2P airflow, Rail Kit

NVIDIA Spectrum-3 based 400GbE 1U Open Ethernet Switch with Cumulus Linux, 32 QSFPDD ports, 2 Power Supplies (AC), x86 CPU, standard depth, P2C airflow, Rail Kit

NVIDIA Spectrum-4 based 400GbE 2U Open Ethernet switch with Cumulus Linux Authentication, 64 QSFP56-DD ports and 2 SFP28 ports, 2 power supplies (AC), x86 CPU, Secure-boot, standard depth, Power-to-Connector airflow, Tool-less Rail Kit

NVIDIA Spectrum-4 based 400GbE 2U Open Ethernet switch with Cumulus Linux Authentication, 64 QSFP56-DD ports and 2 SFP28 ports, 2 power supplies (AC), x86 CPU, Secure-boot, standard depth, Connector-to-Power airflow, Tool-less Rail Kit

NVIDIA Spectrum-4 based 800GbE 2U Open Ethernet switch with Cumulus Linux Authentication, 64 OSFP ports and 1 SFP28 port, MGX Mount with Busbar, x86 CPU, Secure-boot, standard depth, Connector-to-Power Airflow, Tool-less Rail Kit

NVIDIA Spectrum-4 based 800GbE 2U Open Ethernet switch with Cumulus Linux Authentication, 64 OSFP ports and 2 SFP28 ports, 4 AC PSUs, Secure-boot, standard depth, Connector-to-Power Airflow, Tool-less Rail Kit

Extensive Testing

Before leaving our build and configuration facility, all of our server and storage solutions undergo an extensive 48-hour testing procedure. This, along with high-quality, industry-leading components, ensures that all of our systems meet the strictest quality guidelines.

Customization Service

Our main objective is to offer great-value, high-quality server and storage solutions. We understand that every company has different requirements, so we offer a complete customization service to provide server and storage solutions that meet your individual needs.

We have established ourselves as one of the biggest storage providers in the US and, since 1989, have been trusted as the preferred supplier of server and storage solutions to some of the world's biggest brands, including: